TL;DR

SPHERE studies why Mixture-of-Experts (MoE) policies lose their ability to adapt in continual reinforcement learning (RL). It shows that learning updates collapse into too few directions, and it counters this by keeping expert features diverse so that later tasks remain learnable.

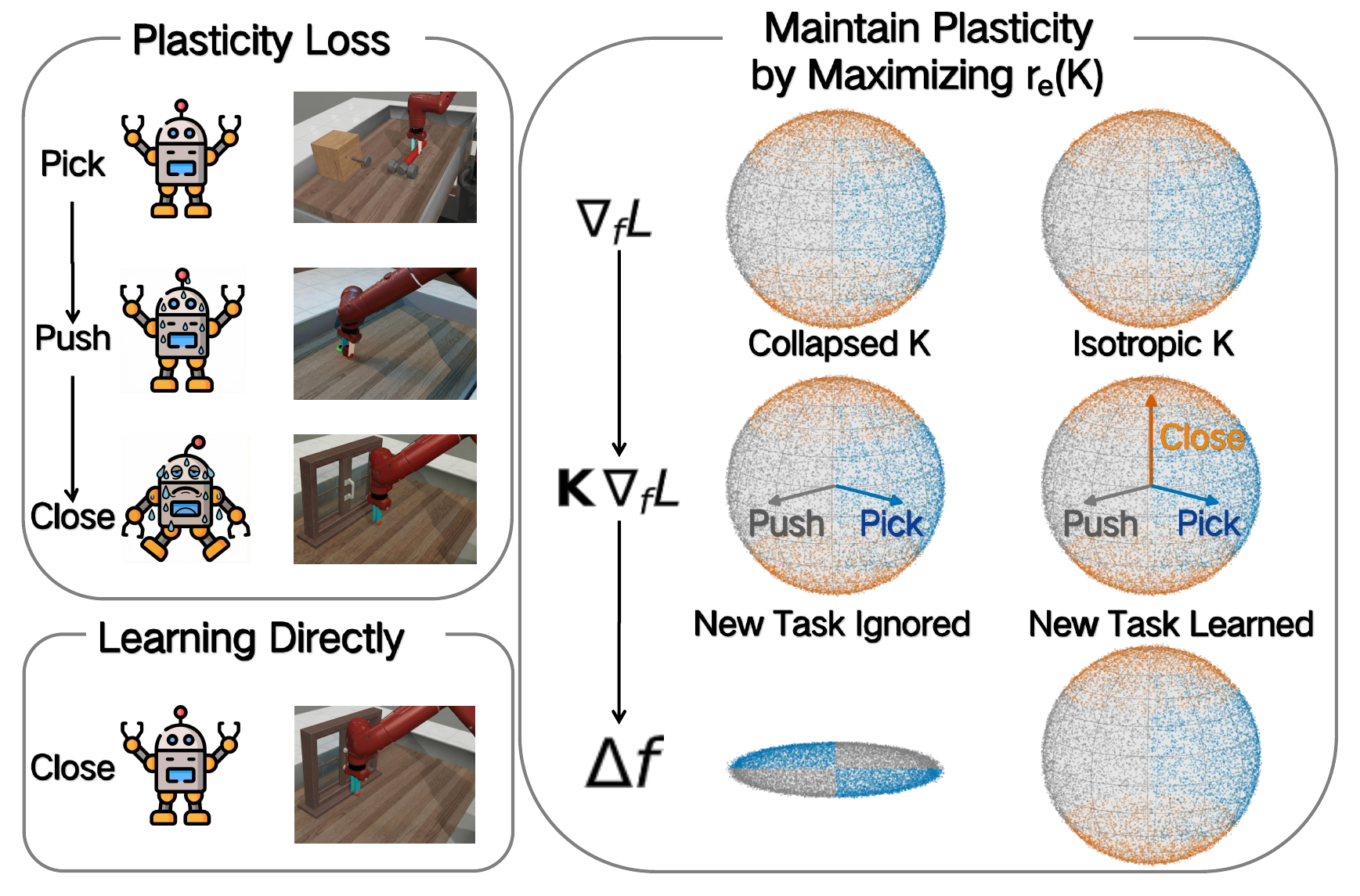

Mechanism: Why Policies Stop Learning in Continual RL

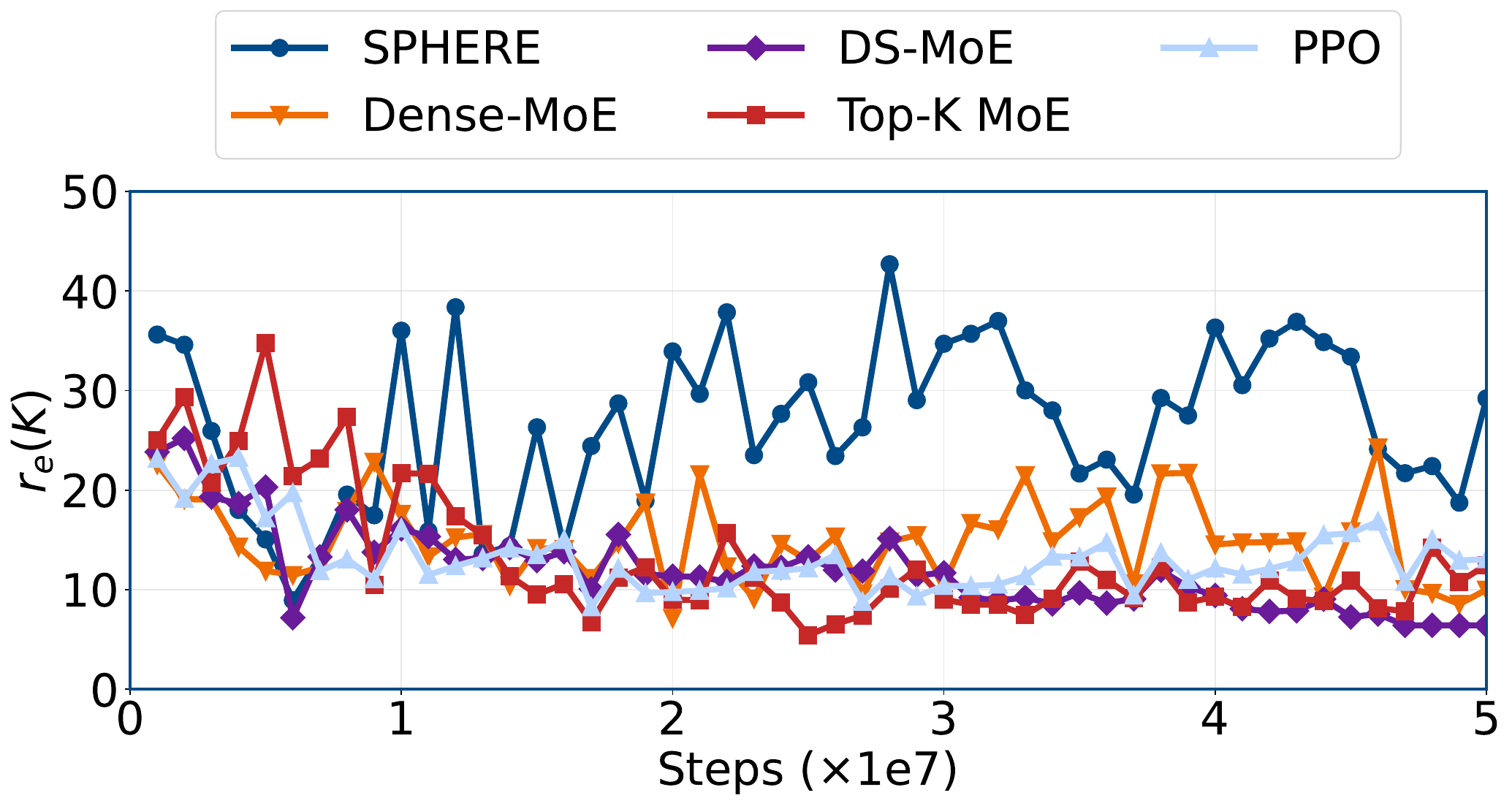

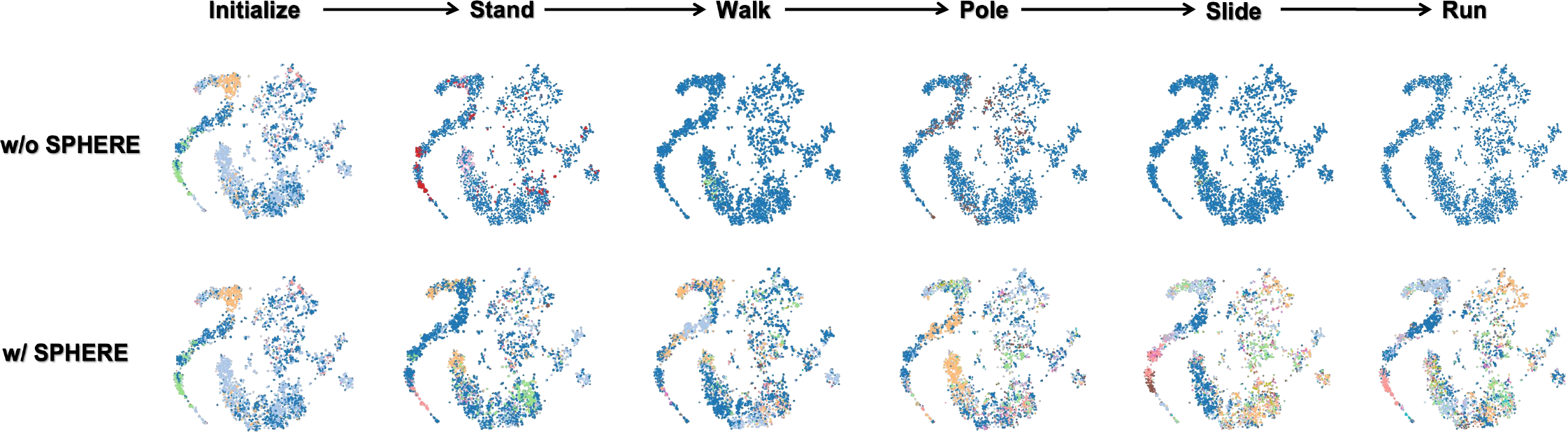

The mechanism story starts with the observed learning slowdown, connects it to collapsed update directions, and then shows how SPHERE keeps those directions more diverse.

Update Geometry: Collapse vs. SPHERE

The visualization makes the diagnosis concrete. A unit sphere of possible gradient directions becomes an ellipsoid after multiplication by the empirical neural tangent kernel (eNTK) matrix $\mathbf{K}$. When $\mathbf{K}$ becomes low-rank, one or more of its eigenvalues shrink toward zero and the ellipsoid flattens toward a plane or, in the extreme case, a line. SPHERE keeps the spectrum more isotropic, so the ellipsoid stays closer to a sphere.

Top row ($\nabla_f L$) shows the input sphere of directions; bottom row ($\mathbf{K}\nabla_f L$) shows the same directions after they are stretched by $\mathbf{K}$.
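The sphere-to-ellipsoid picture can be made precise with a short derivation. This is a sketch that assumes $\mathbf{K}$ is symmetric positive semidefinite (as for a Gram-type eNTK) and restricts attention to a 3D subspace; the symbols $\mathbf{U}$, $\boldsymbol{\Lambda}$, $\lambda_i$, $\mathbf{g}$, and $\mathbf{z}$ are introduced here for illustration and are not taken from the paper.

$$
\mathbf{K} = \mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^\top, \qquad \boldsymbol{\Lambda} = \mathrm{diag}(\lambda_1, \lambda_2, \lambda_3), \qquad \lambda_1 \ge \lambda_2 \ge \lambda_3 \ge 0 .
$$

For a unit direction $\mathbf{g}$ with $\|\mathbf{g}\| = 1$, write the stretched direction in the eigenbasis as $\mathbf{z} = \mathbf{U}^\top \mathbf{K}\mathbf{g} = \boldsymbol{\Lambda}\mathbf{U}^\top\mathbf{g}$. Because $\mathbf{U}^\top\mathbf{g}$ is again a unit vector, whenever all $\lambda_i > 0$ the coordinates satisfy

$$
\frac{z_1^2}{\lambda_1^2} + \frac{z_2^2}{\lambda_2^2} + \frac{z_3^2}{\lambda_3^2} = 1 ,
$$

which is an ellipsoid whose semi-axis lengths are the eigenvalues $\lambda_i$. As $\lambda_3 \to 0$ the ellipsoid flattens toward a disc, and as both $\lambda_2, \lambda_3 \to 0$ it shrinks toward a line segment of half-length $\lambda_1$.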

Interactive 3D visualization comparing baseline Top-K MoE and SPHERE update geometry across HumanoidBench tasks.

This animation is grounded in real Fig. 1 exports: the top-3 eigenvalues of $\mathbf{K}$ shape the ellipsoid for each task, and gradient-direction samples are projected into the same 3D subspace.
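The construction described above can be imitated in a few lines. The following is a minimal sketch, not the code behind the animation: it works directly in the eigenbasis of $\mathbf{K}$, so stretching reduces to scaling each coordinate by an eigenvalue. The eigenvalue triples are hypothetical placeholders rather than the exported values, and `effective_rank` (the exponential of the spectral entropy) is an illustrative isotropy measure assumed here, not necessarily the metric used in the paper.

```python
import math
import random


def sample_unit_sphere(n, seed=0):
    """Draw n approximately uniform unit vectors in 3D via normalized Gaussians."""
    rng = random.Random(seed)
    points = []
    while len(points) < n:
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in v))
        if norm > 1e-12:  # skip the (vanishingly rare) near-zero draw
            points.append([c / norm for c in v])
    return points


def stretch(points, eigenvalues):
    """Apply K in its own eigenbasis: scale coordinate i by eigenvalue i."""
    return [[lam * c for lam, c in zip(eigenvalues, p)] for p in points]


def axis_extents(points):
    """Largest absolute coordinate along each axis of the point cloud."""
    return [max(abs(p[i]) for p in points) for i in range(3)]


def effective_rank(eigenvalues):
    """exp(entropy) of the normalized spectrum; equals 3 for a flat 3D spectrum."""
    total = sum(eigenvalues)
    probs = [lam / total for lam in eigenvalues if lam > 0]
    entropy = -sum(p * math.log(p) for p in probs)
    return math.exp(entropy)


# Hypothetical top-3 eigenvalues, chosen only to contrast the two regimes.
collapsed = [1.0, 0.05, 0.001]
isotropic = [1.0, 0.8, 0.6]

sphere = sample_unit_sphere(2000)
for name, eig in [("collapsed", collapsed), ("isotropic", isotropic)]:
    extents = axis_extents(stretch(sphere, eig))
    print(name, [round(e, 3) for e in extents], round(effective_rank(eig), 2))
```

With the collapsed spectrum the point cloud is nearly a line along the first axis and the effective rank sits close to 1; with the more isotropic spectrum all three axes keep comparable extent.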

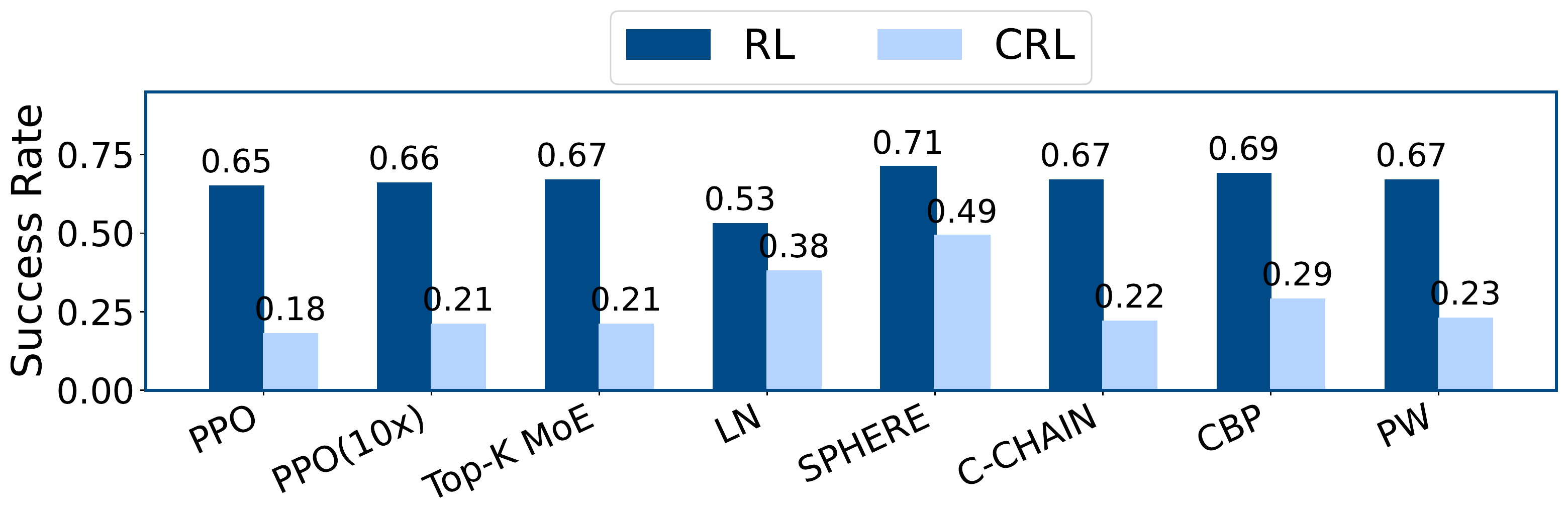

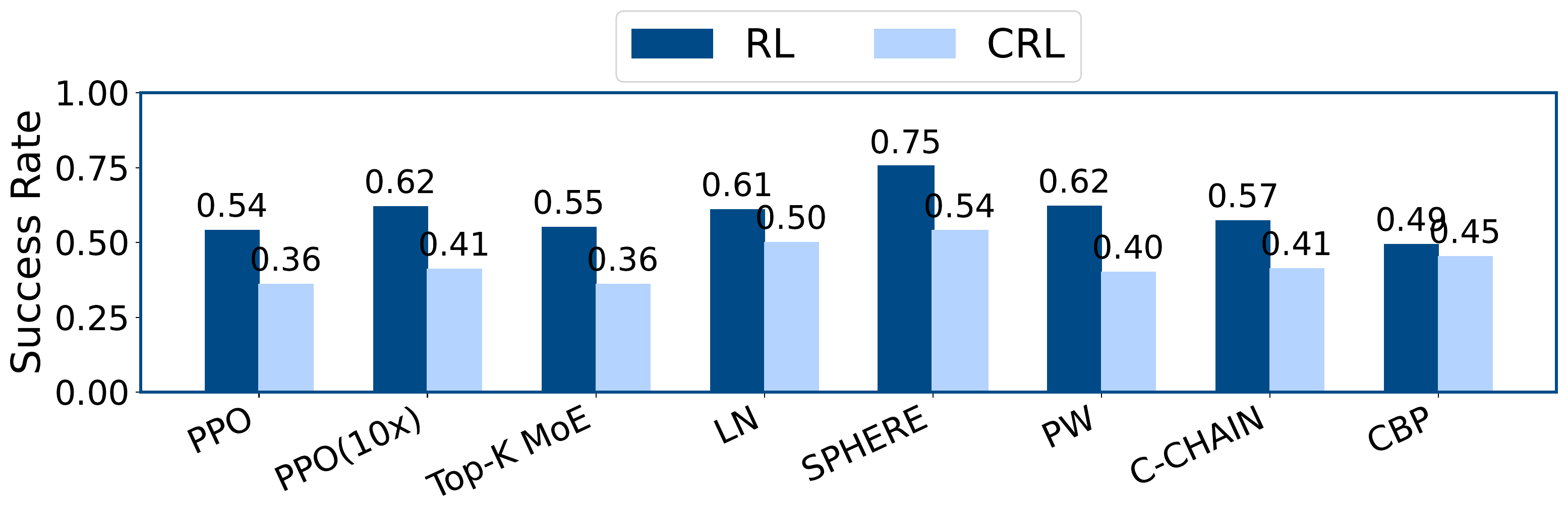

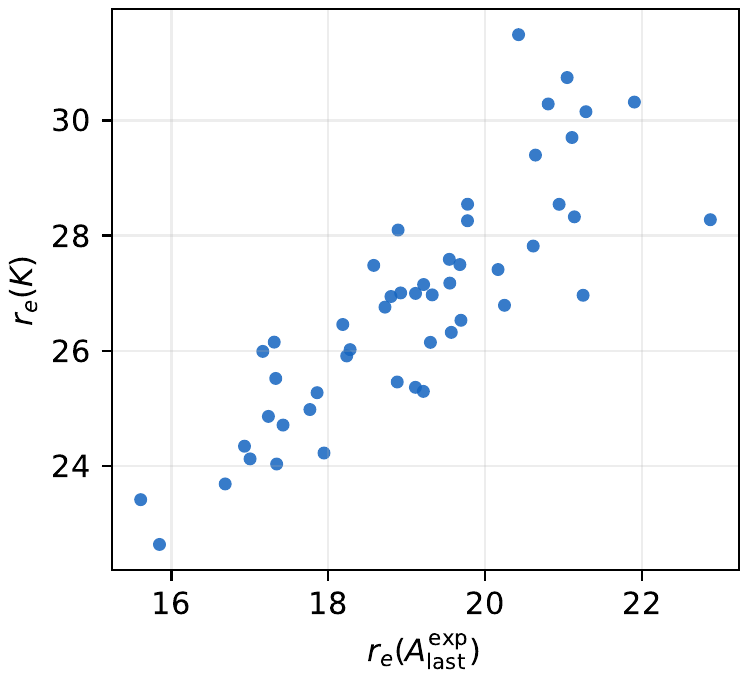

Experiments & Analysis

The story follows the paper: first the failure mode, then the spectral diagnosis, then performance on MetaWorld and HumanoidBench, followed by the design ablation and feature proxy.

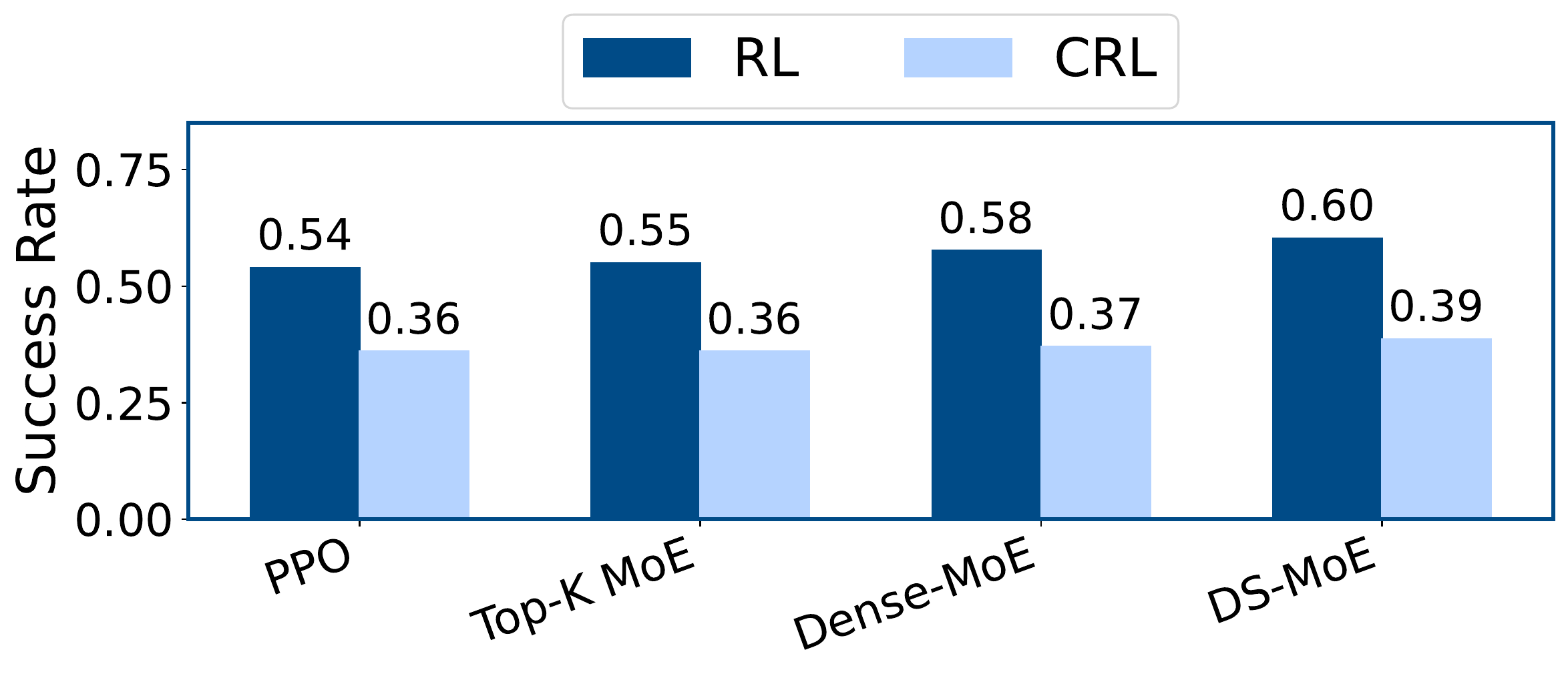

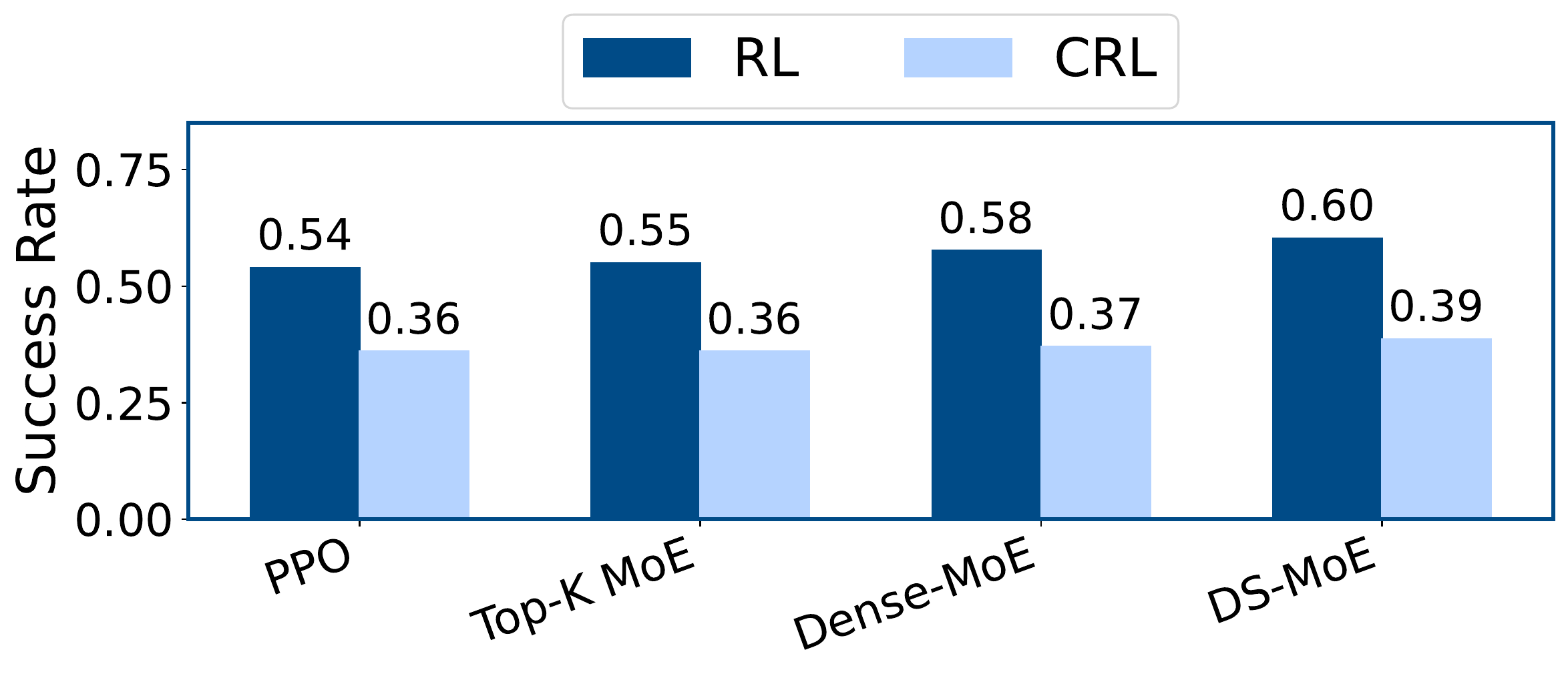

| Variant | Average success |
|---|---|
| w/o SPHERE | 0.36 ± 0.08 |
| w/ SPHERE | 0.54 ± 0.12 |
| All hidden expert layers | 0.42 ± 0.07 |
| Per-expert loss sum | 0.40 ± 0.08 |
| Gradient-factor regularization | 0.43 ± 0.09 |

BibTeX

@inproceedings{luo2026sphere,
  title = {SPHERE: Mitigating the Loss of Spectral Plasticity in Mixture-of-Experts for Deep Reinforcement Learning},
  author = {Luo, Lirui and Zhang, Guoxi and Xu, Hongming and Fang, Cong and Li, Qing},
  booktitle = {Proceedings of the 43rd International Conference on Machine Learning},
  year = {2026}
}